Keynote from Holger Krekel - towards a more effective, decentralized web

Holger started out by telling us that for about a year he has been talking to many people all around the technology scene: not only within a single community but across communities, and to people who are interested in solutions, not implementations.

Since 1969, he argued, the number of papers being written has stagnated, and he took that as a sign that the achievements of mankind are slowing down.

Back in the day, the people doing rocket science were doing actual rocket science.

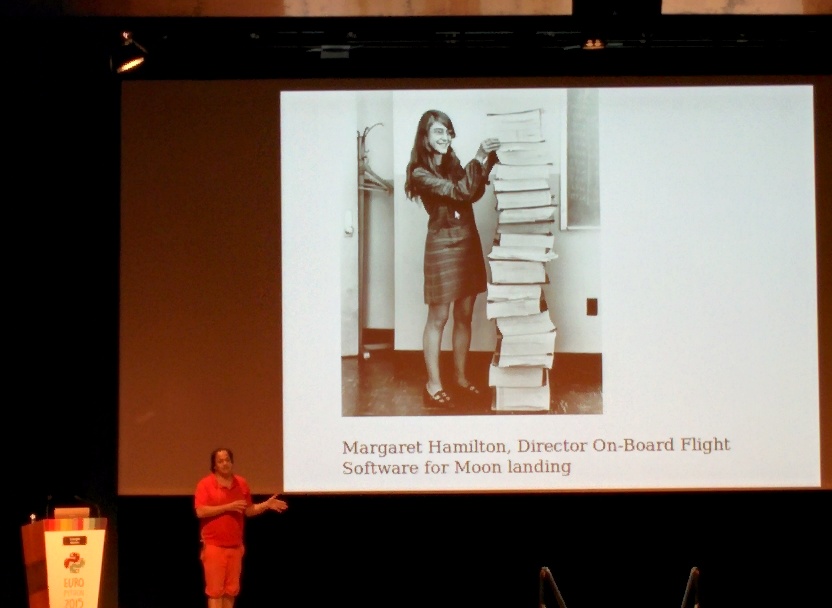

For example, Margaret Hamilton built the control code for the Apollo mission that went to the Moon. Interestingly, the percentage of women working in technology in 1969 was much higher than it is today. Unfortunately, this changed as the PC industry grew more successful and was marketed specifically at males.

But let's back up a little.

Where does the internet and everything we do come from?

In 1936, this telephone used pulse dialing to switch cables all the way to the other endpoint.

Later, in 1974, packet switching with TCP/IP was introduced, and we moved from switching cables via pulses to switching data packets.

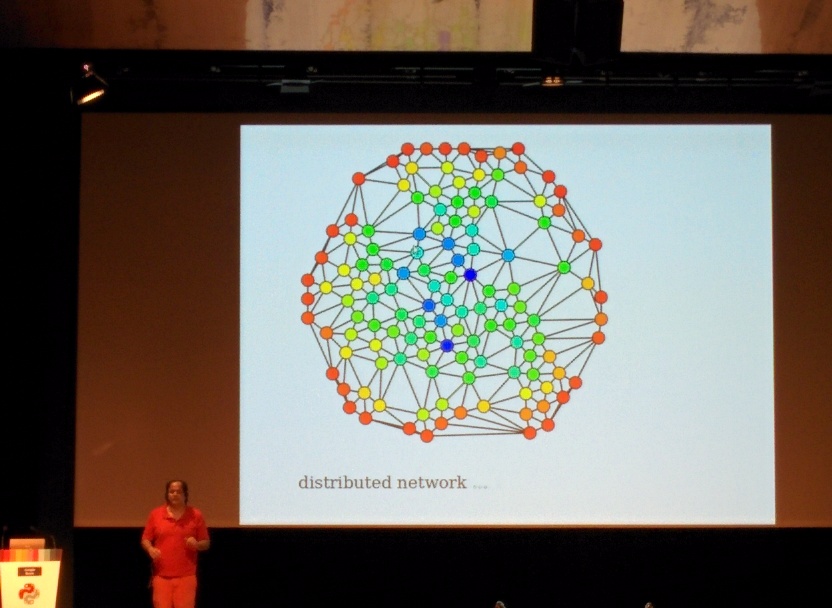

The advantages of this method were obvious: no more setup cost, since the line was already established; the ability to route around unavailable nodes; and the idea that many individual nodes would form a large, free network and share information freely.

Unfortunately, this was a little bit of a hippy dream.

What actually happened is that we ended up with lots of star networks accessed by individuals, and some stars are bigger than others.

An obvious example of a big star is the one that helps you find websites. Another big star tries to help you stay in touch with your friends from university.

This was the picture around 2009.

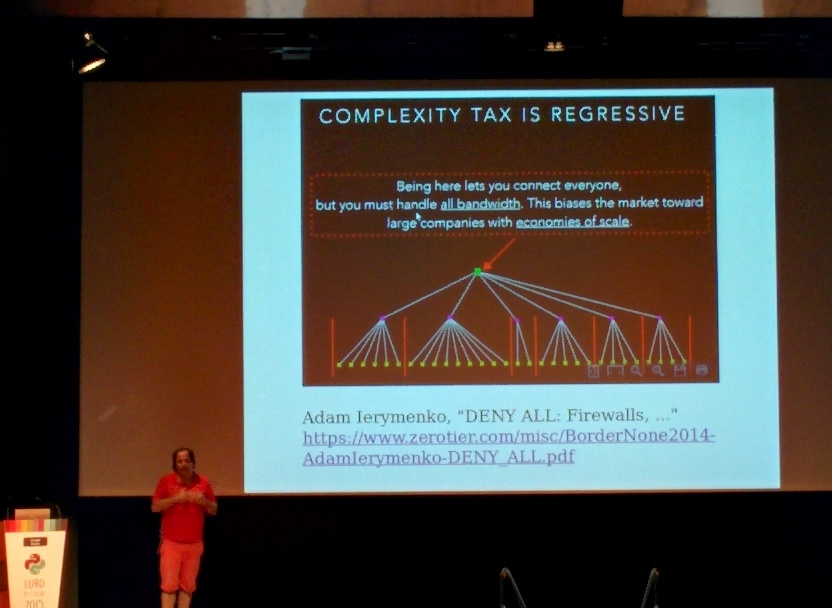

This development allowed the bigger stars, the ones being accessed countless times, to track who’s calling and what they’re looking for.

They quickly understood that they could make money from this knowledge in various ways. Additionally, the setup and operational complexity does not grow with each new person. In simple terms, once they have acquired their first million users, income keeps growing with every user while the cost per user gets lower and lower.

As a result, the best minds in IT are focusing on how to make people click more ads, and unfortunately not on how to build better rockets.

This is what he called the “million to one” architecture: “big data” systems computing over large amounts of data from human interaction.

A famous exception is Elon Musk, who aims to get us to Mars by 2026. This raises the question:

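Does TCP/IP still work on Mars? No. A signal between Earth and Mars takes several minutes each way, far longer than the round trips TCP's handshakes and timeouts are designed around. Sure, we could rebuild a Mars version of our current infrastructure, but what we will really need is a different way to connect everything together.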

This is already happening in the IoT (Internet of Things) space; however, most of the protocols there are proprietary.

Devices interconnect directly with each other (direct links), since uploading everything to somewhere in California would be an unnecessary waste of bandwidth.

One nice initiative in this direction is offlinefirst.org: applications written to prefer local, offline-first storage and state, and to sync when they go online.

To ensure that the sync works as expected, you need to distribute data in a secure way.
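The offline-first pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any offlinefirst.org project actually works: `OfflineFirstStore`, `Remote` and `digest` are hypothetical names, writes always land locally first and are queued, and `sync()` ships each queued entry with a SHA-256 hash so the receiver can detect corruption in transit. A bare hash does not stop a malicious sender, who could simply recompute it; authenticity would additionally need signatures.

```python
from __future__ import annotations

import hashlib
import json


def digest(value: dict) -> str:
    """Deterministic SHA-256 of a JSON-serialisable value (keys sorted)."""
    return hashlib.sha256(json.dumps(value, sort_keys=True).encode()).hexdigest()


class Remote:
    """Hypothetical server: accepts an entry only if its hash checks out."""

    def __init__(self) -> None:
        self.data: dict[str, dict] = {}

    def receive(self, key: str, value: dict, claimed_hash: str) -> None:
        if digest(value) != claimed_hash:
            raise ValueError(f"integrity check failed for {key!r}")
        self.data[key] = value


class OfflineFirstStore:
    """Hypothetical client: writes always succeed locally and are queued."""

    def __init__(self) -> None:
        self.local: dict[str, dict] = {}
        self.outbox: list[tuple[str, dict, str]] = []  # (key, value, hash)

    def put(self, key: str, value: dict) -> None:
        self.local[key] = value
        self.outbox.append((key, value, digest(value)))

    def get(self, key: str) -> dict | None:
        return self.local.get(key)

    def sync(self, remote: Remote) -> None:
        """Push queued writes once online; an entry leaves the queue only after it is accepted."""
        while self.outbox:
            key, value, claimed = self.outbox[0]
            remote.receive(key, value, claimed)
            self.outbox.pop(0)
```

Calling `put()` while offline only touches `local` and `outbox`; `sync()` drains the queue once a `Remote` is reachable, and a rejected entry stays queued for the next attempt.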

Examples of more recent technologies that allow us to do that are Git, BitTorrent, ZFS, Bitcoin, Tahoe-LAFS, Cassandra and Riak.

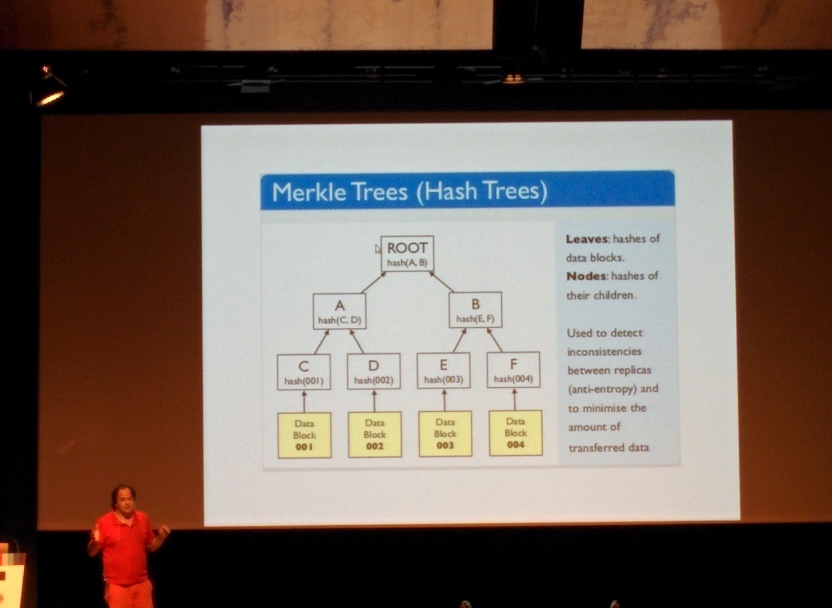

Interestingly, all of these are based on Merkle trees.

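A Merkle tree is a tree of hashes: each leaf holds the hash of a block of data, and each inner node holds the hash of its children, so a single root hash identifies the entire content. Because hashing is a one-way computation, these hashes can be used as keys in a distributed hash table (DHT). All the implementations listed above are datastores and databases.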
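To make the definition concrete, here is a minimal sketch of computing a Merkle root, assuming SHA-256 as the hash and a simple binary tree. How to handle an odd number of nodes differs between real systems (Bitcoin, for example, duplicates the last hash); this sketch just promotes the unpaired node unchanged, and it omits the leaf/inner-node domain separation that hardened designs add.

```python
from __future__ import annotations

import hashlib


def h(data: bytes) -> bytes:
    """One-way hash used for leaves and inner nodes alike."""
    return hashlib.sha256(data).digest()


def merkle_root(blocks: list[bytes]) -> bytes:
    """Hash every block, then repeatedly hash adjacent pairs until one hash is left."""
    if not blocks:
        raise ValueError("need at least one block")
    level = [h(b) for b in blocks]
    while len(level) > 1:
        nxt = [h(level[i] + level[i + 1]) for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:  # odd count: promote the last hash unchanged
            nxt.append(level[-1])
        level = nxt
    return level[0]
```

Changing any single block changes its leaf hash, every hash on the path above it, and therefore the root, which is why the root can serve as an address for the whole dataset.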

What we have not done yet is use DHTs to implement a web protocol.

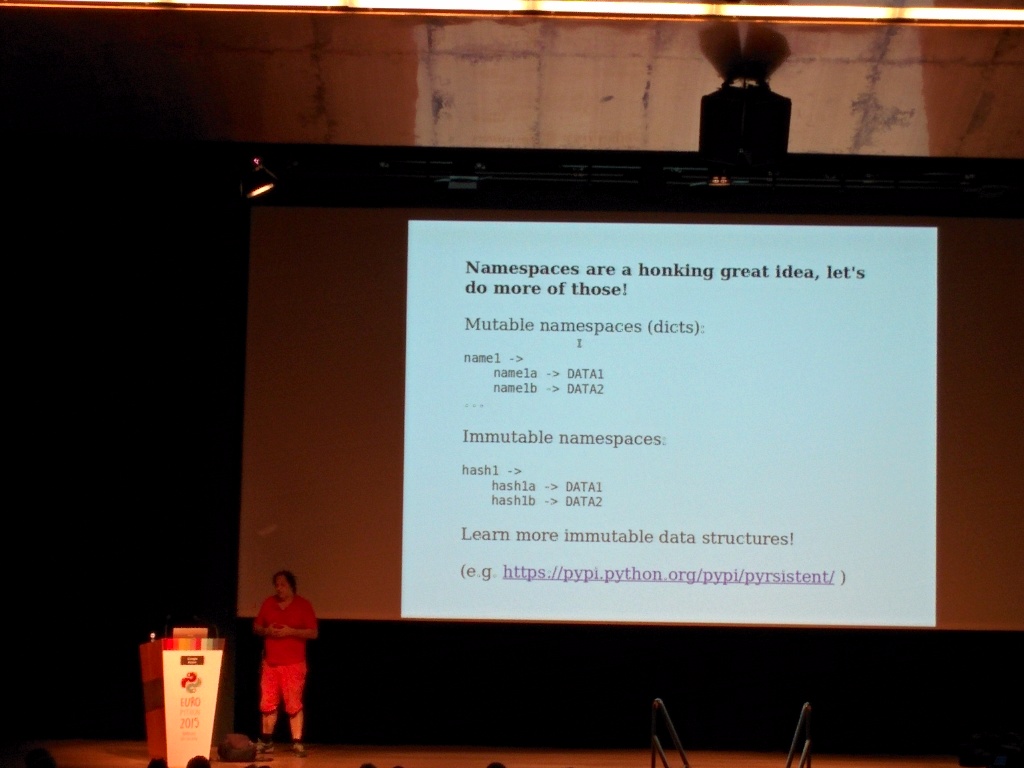

In recent years, more and more programmers have turned to immutability to reason about their programs better. Immutability helps to ensure correctness not only on multi-threaded machines but also in massively distributed systems.

Examples of languages and libraries are Haskell, Scala, Clojure, Immutable.js and Pyrsistent.
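As a small illustration of the idea, here is a sketch using only Python's standard library rather than Pyrsistent (which offers persistent collections): a frozen dataclass rejects mutation, and an "update" produces a new value while the original stays intact. The `Point` class is purely illustrative.

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Point:
    """An immutable value: fields cannot be reassigned after construction."""
    x: int
    y: int


p1 = Point(1, 2)
p2 = replace(p1, x=10)  # "updating" builds a new object; p1 is untouched

try:
    p1.x = 99  # type: ignore[misc]
except FrozenInstanceError:
    print("p1 cannot be mutated")

print(p1, p2)  # Point(x=1, y=2) Point(x=10, y=2)
```

Because `p1` can never change, any thread or remote node holding it can rely on its value without locks or coordination; frozen dataclasses are also hashable, so they can be used as dictionary keys.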

One last concept to discuss before we move on is namespaces.

“Namespaces are one honking great idea -- let's do more of those!” (Zen of Python, Tim Peters)

Namespaces allow programmers to address individual functionality or data in a more organized and obvious way.

Say you're in England. You hear someone talking about "boots". You think of what Americans call "trunks". That's namespaces.

The idea of namespaces are that you give things names, and then package up those names in something called a namespace. And then, down the road, you open up that package and start referring to those things by name again.

(http://tech.jonathangardner.net/wiki/Namespace)
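The "boots" analogy maps directly onto code. This is a toy sketch with deliberately simplified word meanings, using `types.SimpleNamespace` from the standard library; the three namespace names are made up for illustration.

```python
from types import SimpleNamespace

# Each namespace is its own small table of names (meanings simplified).
british_english = SimpleNamespace(boot="luggage compartment of a car")
american_english = SimpleNamespace(trunk="luggage compartment of a car")
footwear = SimpleNamespace(boot="shoe that covers the ankle")

# The same thing can carry different names in different namespaces,
print(british_english.boot == american_english.trunk)  # True
# and the same name can mean different things without clashing.
print(british_english.boot == footwear.boot)  # False
```

Qualifying every lookup with its namespace (`british_english.boot` versus `footwear.boot`) is what lets both meanings of "boot" coexist.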

If you combine all of the above concepts, you arrive at the need for a massively scalable, distributed information exchange system that uses namespaces to address immutable data: the Interplanetary Filesystem.

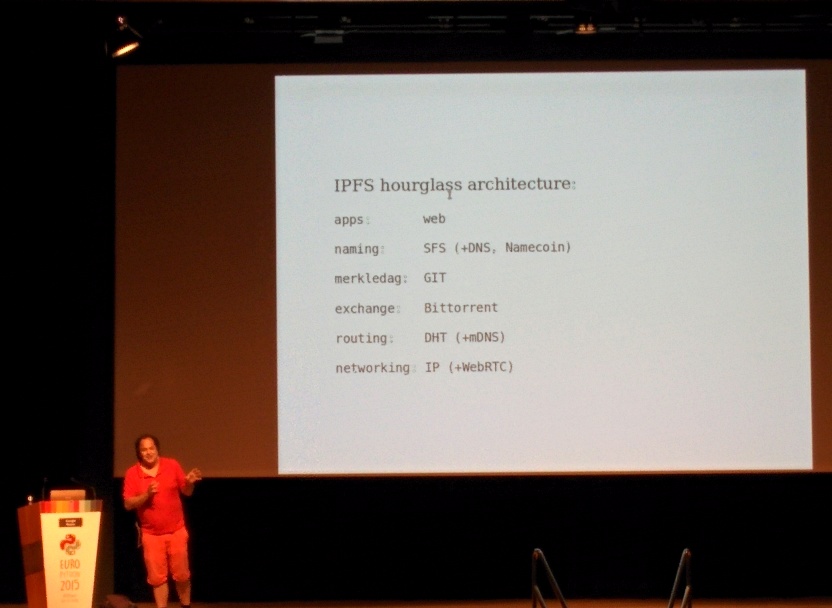

IPFS - the Interplanetary Filesystem

IPFS is exactly that: a distributed, immutable Merkle tree of data that can be addressed directly.

In contrast to HTTP, content is fetched by a Merkle tree hash that references the data itself, not the address of another computer.
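Content addressing can be sketched as a store whose keys are the hashes of the stored bytes. This is a toy model, not IPFS's actual API: the `ContentStore` class is hypothetical, and real IPFS addresses are encoded content identifiers (CIDs built on multihashes) over chunked Merkle DAGs rather than a plain SHA-256 hex string over the whole file.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key is the hash of the bytes."""

    def __init__(self) -> None:
        self._blocks: dict = {}

    def put(self, data: bytes) -> str:
        key = hashlib.sha256(data).hexdigest()
        self._blocks[key] = data  # identical content always lands on the same key
        return key

    def get(self, key: str) -> bytes:
        data = self._blocks[key]
        # Whoever served the bytes, the reader can check them against the address.
        if hashlib.sha256(data).hexdigest() != key:
            raise ValueError("content does not match its address")
        return data
```

The design choice this illustrates: because the address is derived from the data, it does not matter which computer answers the request, and storing the same content twice is automatically deduplicated.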

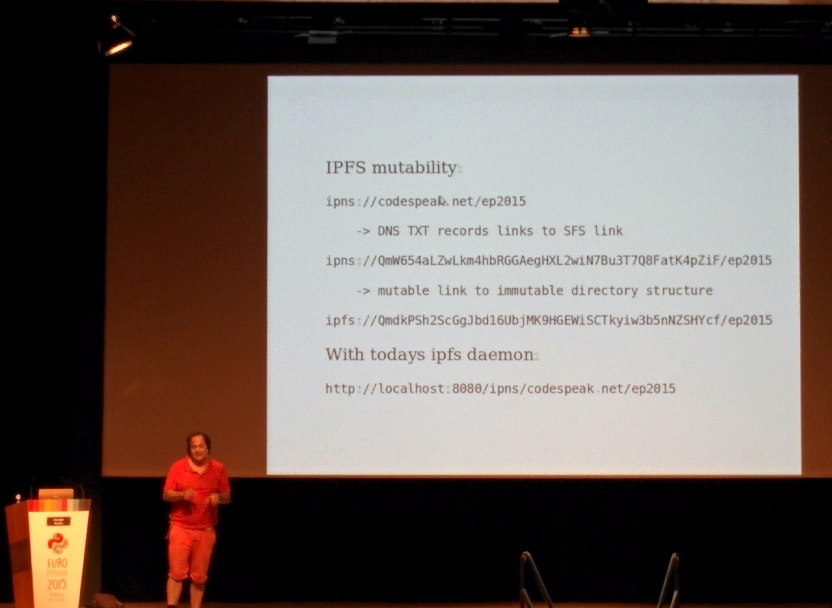

These hashes are long and hard to remember, so IPFS uses DNS for easy, human-readable discovery.

The system is still in development, but for now it would be used as follows:

`ipns://example.com/ep2015` -> resolves via DNS to

`ipns://<hash value>/ep2015` -> resolves via a special lookup to

`ipfs://<data hash value>/ep2015` (the actual address of your specific content)
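The two-step resolution chain can be modelled with plain lookup tables. This sketch is hypothetical throughout: `DNS_NAMES` stands in for the DNS step (in practice a DNS TXT record, known as DNSLink), `IPNS_RECORDS` stands in for the "special lookup", and the `Qm...` values are made-up placeholders, not real hashes.

```python
# Hypothetical stand-ins for DNS and the IPNS record lookup; placeholder hashes.
DNS_NAMES = {"example.com": "QmNameHashPlaceholder"}  # domain -> IPNS name
IPNS_RECORDS = {"QmNameHashPlaceholder": "QmDataHashPlaceholder"}  # IPNS name -> content hash


def resolve(path: str) -> str:
    """Follow ipns:// names until an immutable ipfs:// address is reached."""
    scheme, rest = path.split("://", 1)
    name, _, sub_path = rest.partition("/")
    if scheme == "ipfs":
        return path  # already points at immutable content
    if scheme != "ipns":
        raise ValueError(f"unsupported scheme: {scheme}")
    if name in DNS_NAMES:  # step 1: human-readable name via DNS
        return resolve(f"ipns://{DNS_NAMES[name]}/{sub_path}")
    # step 2: mutable IPNS name -> current immutable content hash
    return resolve(f"ipfs://{IPNS_RECORDS[name]}/{sub_path}")
```

For example, `resolve("ipns://example.com/ep2015")` walks both steps and yields `ipfs://QmDataHashPlaceholder/ep2015`. Only the final `ipfs://` address is immutable; the two `ipns://` layers are the mutable pointers that let a name move to new content.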

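Naming is handled by self-certifying names: an IPNS name is derived from the hash of a public key, so anyone can verify that a name record was signed by the holder of the matching private key, without trusting a central authority.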

Data is located through a distributed hash table and transferred in a BitTorrent-like fashion, and the networking is simply IP-based, because that works.
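IPFS's distributed hash table belongs to the Kademlia family, as does BitTorrent's Mainline DHT. The core idea is that keys and node IDs live in the same ID space, and the nodes "closest" to a key under XOR distance are responsible for it. The sketch below shows only that distance rule; real implementations add routing buckets, iterative lookups and replication, and the SHA-256 ID derivation in `node_id` is an assumption for illustration.

```python
from __future__ import annotations

import hashlib


def node_id(name: str) -> int:
    """Illustrative 256-bit ID: SHA-256 of a name, read as an integer."""
    return int.from_bytes(hashlib.sha256(name.encode()).digest(), "big")


def closest_peers(key: int, peers: dict[str, int], k: int = 2) -> list[str]:
    """Return the k peers whose IDs have the smallest XOR distance to key."""
    return sorted(peers, key=lambda p: peers[p] ^ key)[:k]
```

With four peers whose IDs are 1, 6, 8 and 7 and a key of 5, the XOR distances are 4, 3, 13 and 2, so `closest_peers(5, ...)` picks the peers with IDs 7 and 6. XOR is used because it behaves like a distance (symmetric, zero only for identical IDs), which lets every node agree on who should hold a given key.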

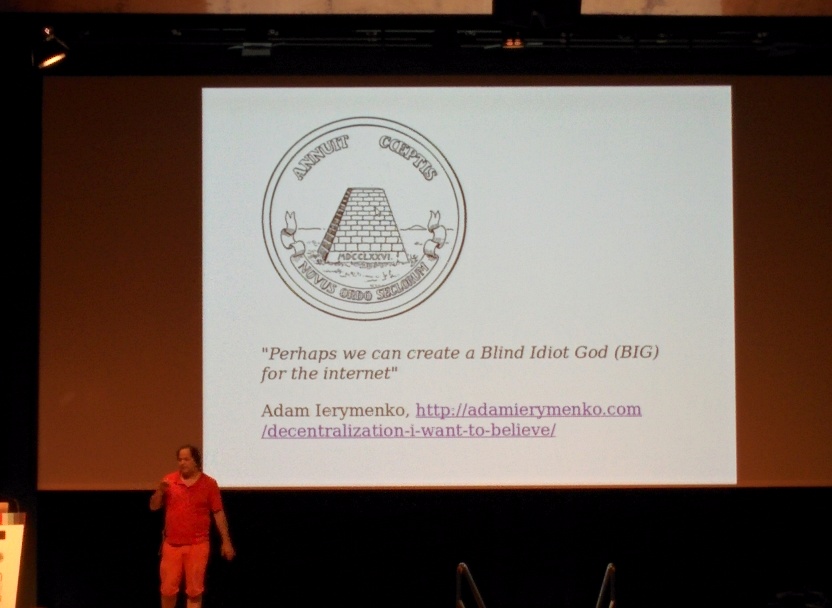

The Blind Idiot God

Unfortunately, there is a problem in the discovery phase. If everything is distributed and nodes go online and offline at random, how does one ensure that a node joining or rejoining the network sees the current state of reality when it comes online?

In current implementations this is achieved via stable nodes, which know about most of the tree or even hold a full snapshot of it. This is a problem: how can we trust them? They might lie to us.

The open question is therefore: how can we fix this?

How can we deliver updates of the Merkle tree to nodes that are joining or reconnecting?
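Merkle trees already solve part of this: if a joining node trusts a root hash, a stable node cannot pass off forged content, because it must supply an inclusion proof (the sibling hashes along the path) that recomputes to that root. The minimal sketch below assumes SHA-256 and a binary tree that promotes an unpaired node unchanged; `root_and_proof` and `verify` are hypothetical helpers. It deliberately does not answer the open question above, namely whether the trusted root is the latest one.

```python
from __future__ import annotations

import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def root_and_proof(blocks: list[bytes], index: int) -> tuple[bytes, list[tuple[bytes, bool]]]:
    """Compute the Merkle root and an inclusion proof for blocks[index].

    The proof lists (sibling_hash, sibling_is_left) from leaf to root. An
    unpaired last node is promoted unchanged and so adds no proof step.
    """
    level = [h(b) for b in blocks]
    proof: list[tuple[bytes, bool]] = []
    while len(level) > 1:
        sibling = index ^ 1  # partner of index within its pair
        if sibling < len(level):
            proof.append((level[sibling], sibling < index))
        nxt = [h(level[i] + level[i + 1]) for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:
            nxt.append(level[-1])
        level = nxt
        index //= 2
    return level[0], proof


def verify(block: bytes, proof: list[tuple[bytes, bool]], trusted_root: bytes) -> bool:
    """Recompute the path from the block upwards and compare with the trusted root."""
    node = h(block)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == trusted_root
```

A stable node would answer a request with the block plus its proof; the joiner calls `verify()` against the root it already trusts. A lying node can still withhold data or hand out an outdated root, and that freshness problem is exactly what remains open.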

Currently, we have one gateway at gateway.ipfs.io, and the current version is implemented in Go. No versioning is enforced either: simply publishing a new version and referencing it would make the old one go away.